Microsoft Research: ‘Code Space’ Combines Kinect With Display For Sharing (Developers)

November 11, 2011

The Microsoft Research team always comes up with great projects and ideas. Their new project, ‘Code Space’, doesn’t disappoint, as it looks to connect devices using Kinect sensors and hand gestures.

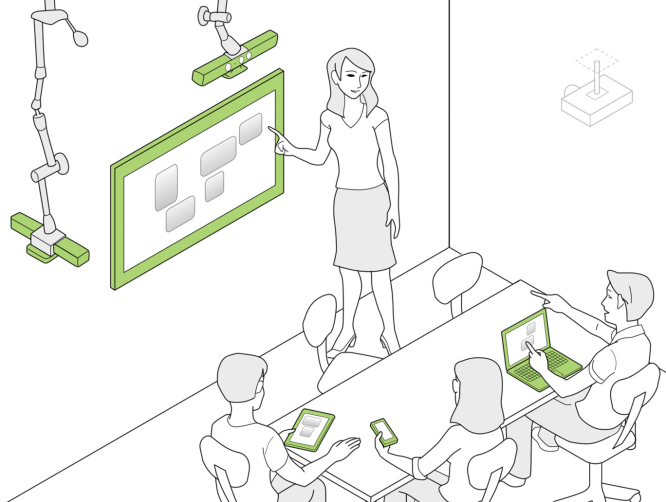

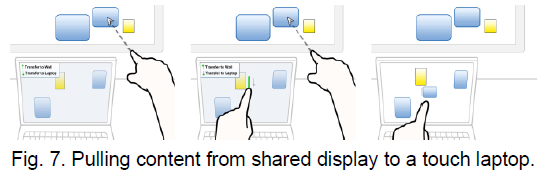

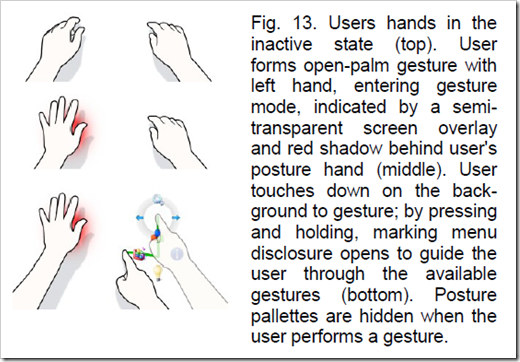

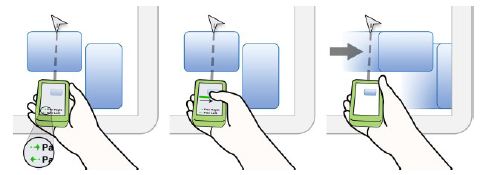

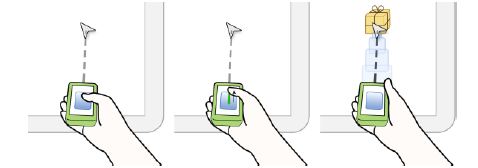

We present Code Space, a system that contributes touch + air gesture hybrid interactions to support co-located, small group developer meetings by democratizing access, control, and sharing of information across multiple personal devices and public displays. Our system uses a combination of a shared multi-touch screen, mobile touch devices, and Microsoft Kinect sensors. We describe cross-device interactions, which use a combination of in-air pointing for social disclosure of commands, targeting and mode setting, combined with touch for command execution and precise gestures. In a formative study, professional developers were positive about the interaction design, and most felt that pointing with hands or devices and forming hand postures are socially acceptable. Users also felt that the techniques adequately disclosed who was interacting and that existing social protocols would help to dictate most permissions, but also felt that our lightweight permission feature helped presenters manage incoming content.

This project is aimed at sharing code and information in developer meetings using a large shared display and simple in-air hand gestures.

In-air gestures allow users to take content from the display onto devices such as laptops, tablets and smartphones.

Below are some in-air gestures using a smartphone (hopefully a Windows Phone).

Although this particular project is aimed at developers, I can easily see something like this branching out to business meetings and the schools of the future. It could be a perfect way to share content within a small group of people.

Source: Channel9
